The answer is no, at least not yet.

Artificial intelligence (AI) tools help automate literature screening, data extraction and evidence synthesis, but they remain just that: tools. They cannot replace the experts using them. This distinction matters most when the output feeds a regulatory dossier, an HTA committee presentation or a public health policy decision.

Large language models (LLMs) can write fluently, producing text that reads as authoritative even when the underlying reasoning is wrong or the cited evidence does not exist. In a systematic review, this can have disastrous consequences, especially in pharmacoepidemiology and real-world evidence reviews, where study designs vary widely and confounding is a constant challenge.

A PICO question does not exist in isolation: the relevance of a given study depends on the therapeutic area, the regulatory context, the existing evidence base and even the track record of a particular health authority. An LLM cannot weigh those factors (yet). A senior epidemiologist with experience of EMA submissions or NICE appraisals can. These experts will immediately spot that randomisation was inadequate, that the comparator was poorly chosen, or that follow-up was too short to capture the outcome of interest. This is not pattern recognition, but years of clinical and methodological experience applied to a specific scientific question.

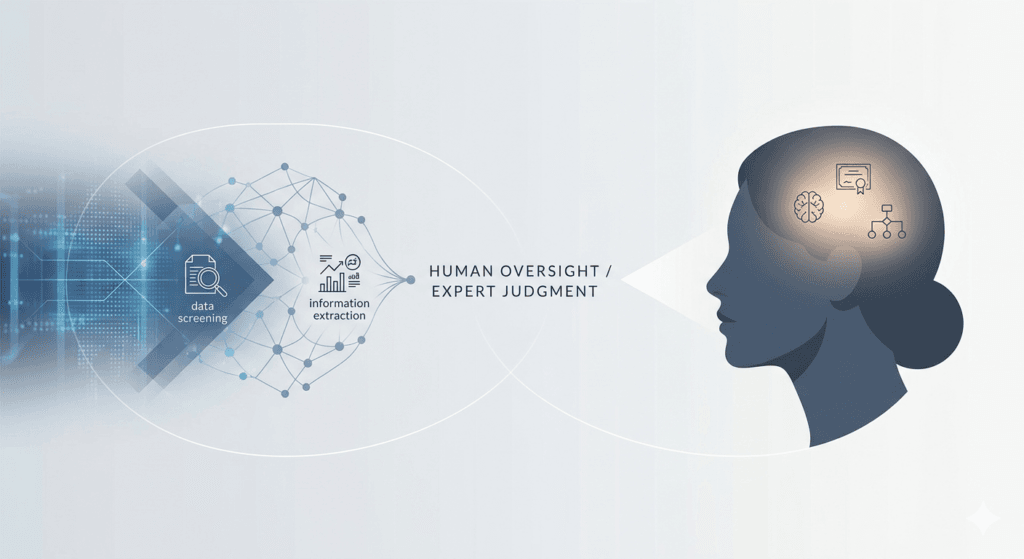

AI tools do add genuine value for searching the literature, screening titles and abstracts, and extracting data. Tasks that used to take weeks now take days, an efficiency gain that reduces costs for contractors. However, expert judgment cannot be skipped for risk-of-bias assessment, evidence synthesis and interpretation.

AI and human expertise are not in competition; they need to work together. The key is knowing which decisions require a person and which do not.

© epiSphera, 2026. Licensed under CC BY 4.0.